knitr::include_graphics("../images/common_statistical_tests_are_linear_models_cheat_sheet.png")

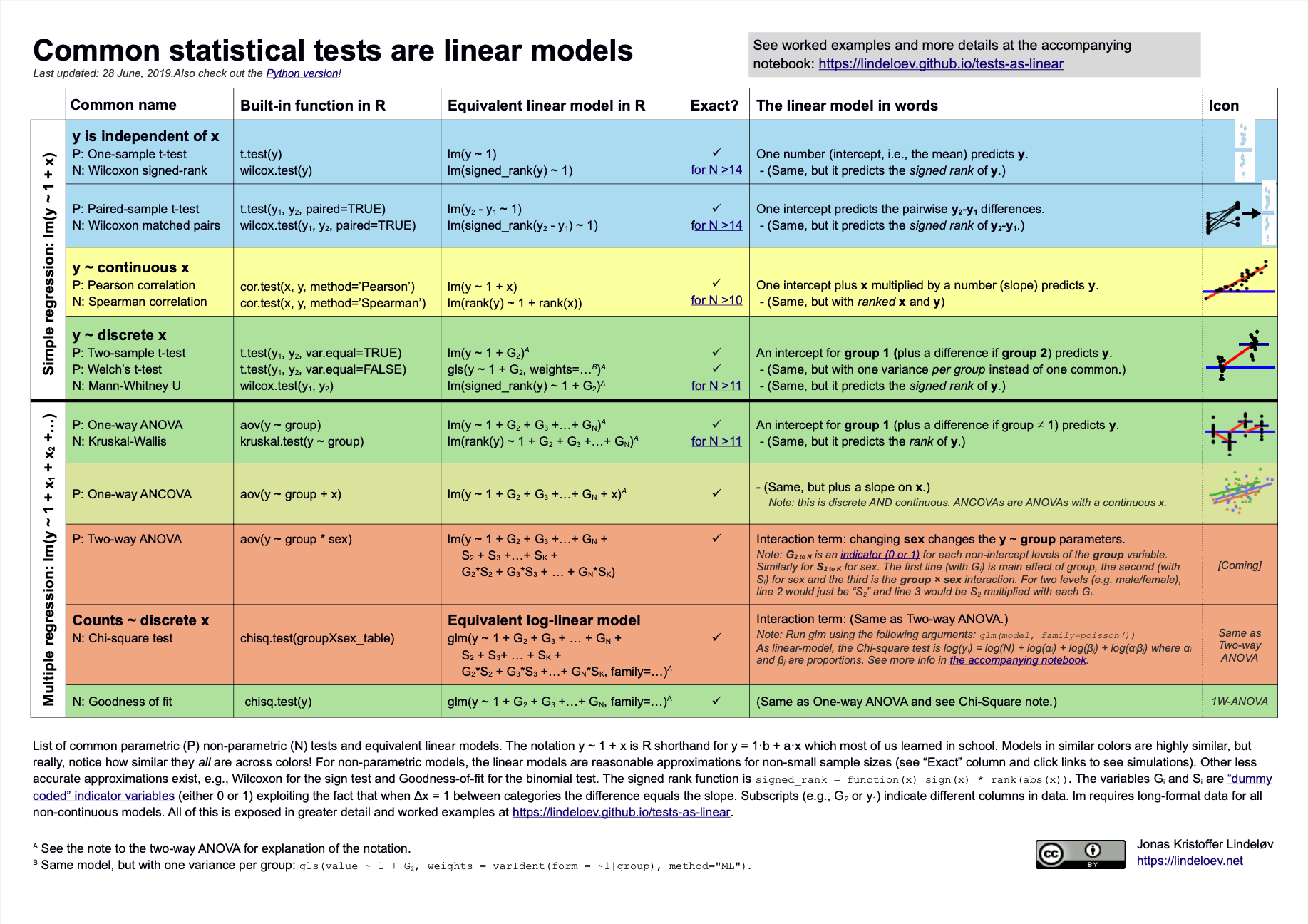

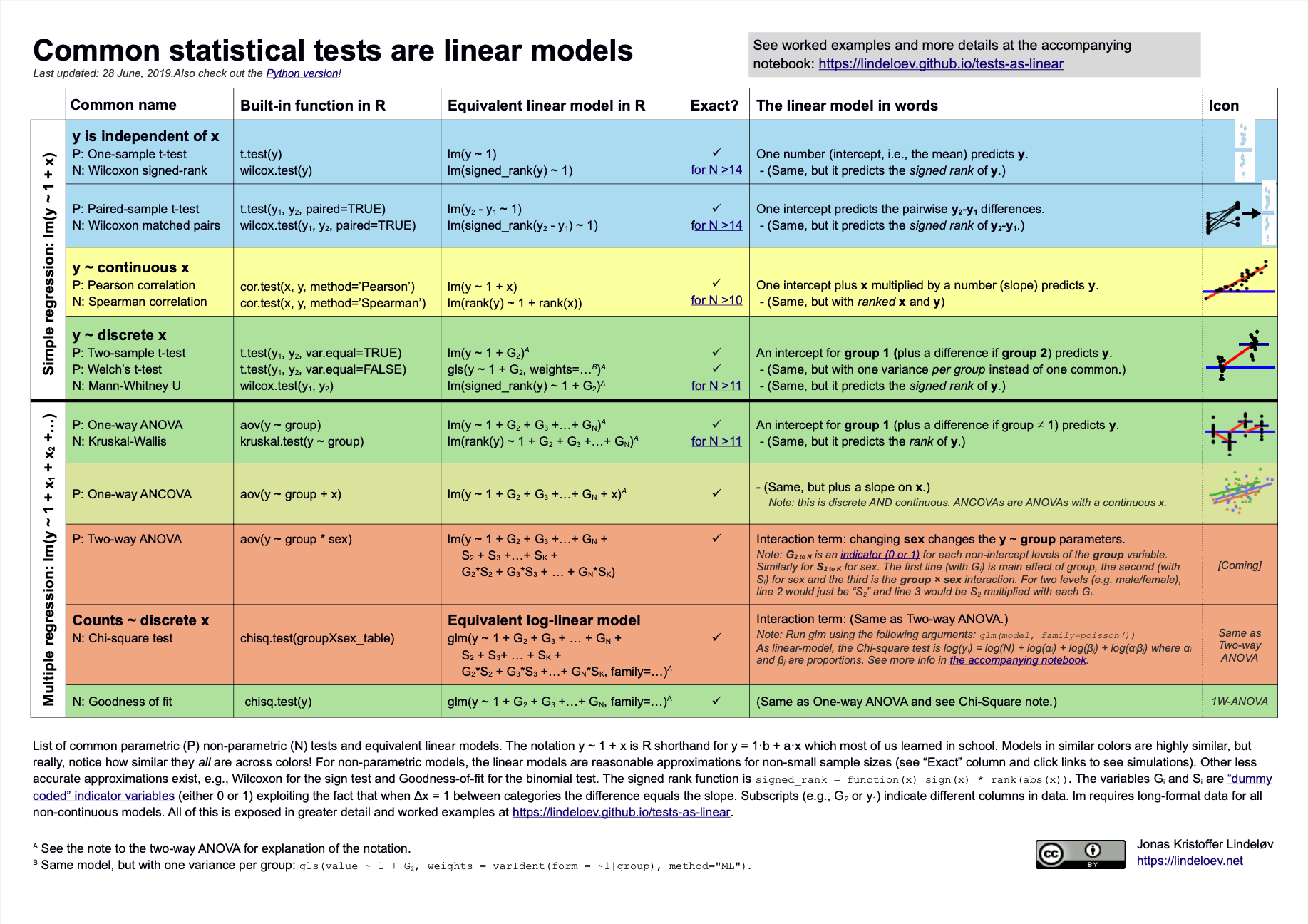

What is the relationship between a linear regression, a Student’s t-test and a Wilcox rank-sum test? Or between linear regression and ANOVA?

What is the difference between a hypothesis test like a Student’s t-test and a test of assumptions such as Shapiro-Wilk’s test (for normality) or Levene’s test (for homogeneity of variances)?

Almost everything is a linear model.

knitr::include_graphics("../images/common_statistical_tests_are_linear_models_cheat_sheet.png")

What do most statistics textbooks tell you to do when trying to test if two groups’ means differ?

Why? What benefits are there for doing so? Or what bad things happen if you don’t?

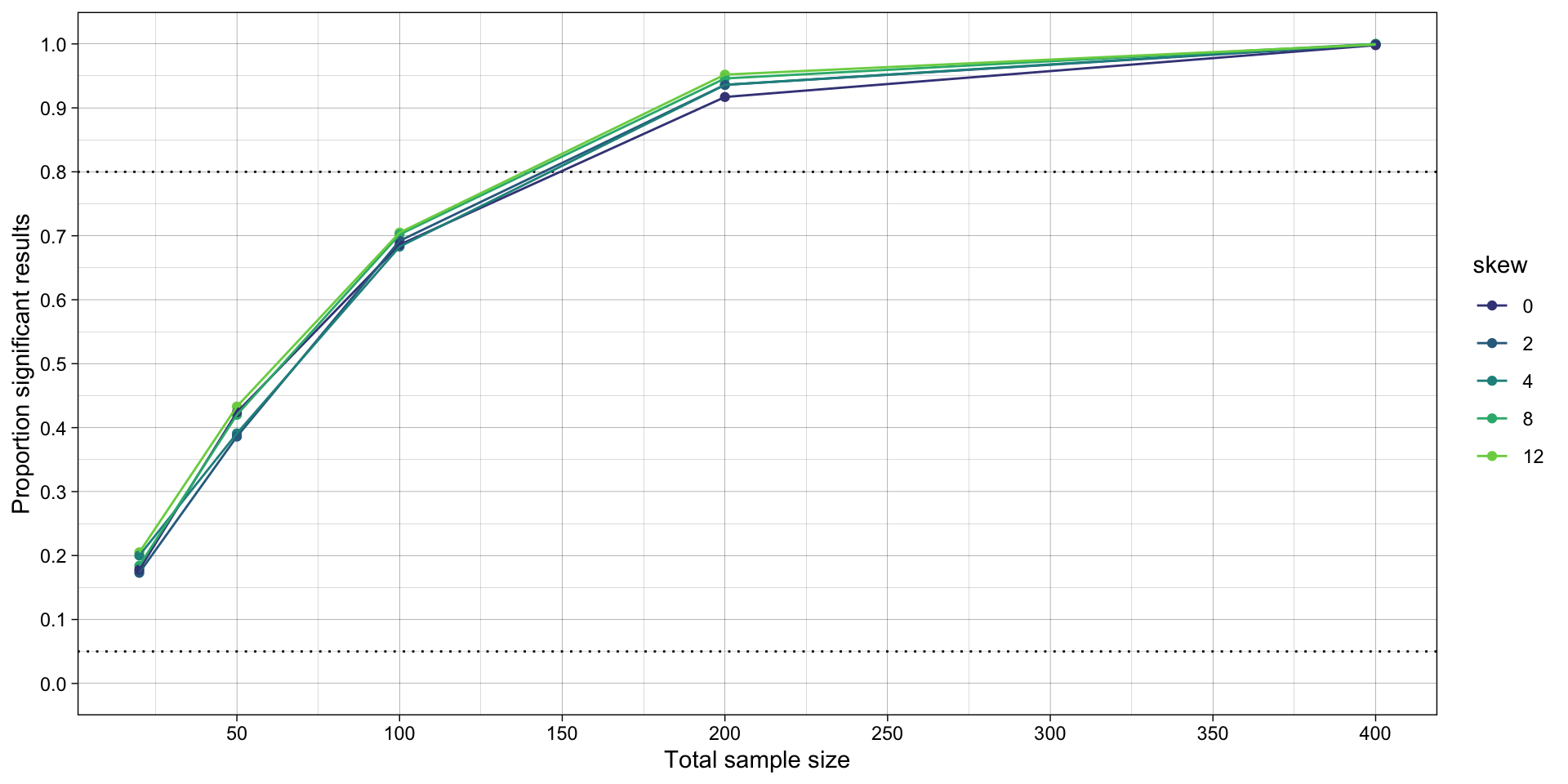

In a previous session, we observed that violations of the assumption of normality actually has very little impact on the false-positive and false-negative rates of a t-test, as long as the two conditions have similarly non-normal data, which is plausible in many situations. This lesson seeks to answer two related questions:

# dependencies

library(tidyr)

library(dplyr)

library(forcats)

library(readr)

library(purrr)

library(furrr)

library(ggplot2)

library(scales)

library(sn)

# set up parallelization

plan(multisession)Code taken from the previous lesson, with some simplifications.

# remove all objects from environment ----

rm(list = ls())

# define data generating function ----

generate_data <- function(n,

location_control, # location, akin to mean

location_intervention,

scale, # scale, akin to SD

skew) { # slant/skew. When 0, produces normal/gaussian data

data_control <-

tibble(condition = "control",

score = rsn(n = n,

xi = location_control, # location, akin to mean

omega = scale, # scale, akin to SD

alpha = skew)) # slant/skew. When 0, produces normal/gaussian data

data_intervention <-

tibble(condition = "intervention",

score = rsn(n = n,

xi = location_intervention, # location, akin to mean

omega = scale, # scale, akin to SD

alpha = skew)) # slant/skew. When 0, produces normal/gaussian data

data <- bind_rows(data_control,

data_intervention)

return(data)

}

# define data analysis function ----

analyze_data_students_t <- function(data) {

hypothesis_test_students_t <- t.test(formula = score ~ condition,

data = data,

var.equal = TRUE,

alternative = "two.sided")

res <- tibble(hypothesis_test_p_students_t = hypothesis_test_students_t$p.value)

return(res)

}

# set the seed ----

# for the pseudo random number generator to make results reproducible

set.seed(42)

# define experiment parameters ----

experiment_parameters <- expand_grid(

n = c(10, 25, 50, 100, 200),

mu_control = 0,

mu_intervention = 0.5,

sigma = 1,

skew = c(0, 2, 4, 8, 12), # slant/skew. When 0, produces normal/gaussian data

iteration = 1:1000

) |>

# calculate skew-normal parameters

# don't worry about the math, it's not important to understand

mutate(delta = skew / sqrt(1 + skew^2),

scale = sigma / sqrt(1 - 2 * delta^2 / pi),

location_control = mu_control - scale * delta * sqrt(2 / pi),

location_intervention = mu_intervention - scale * delta * sqrt(2 / pi))

# run simulation ----

simulation <-

# using the experiment parameters

experiment_parameters |>

# generate data using the data generating function and the parameters relevant to data generation

mutate(generated_data = future_pmap(.l = list(n = n,

location_control = location_control,

location_intervention = location_intervention,

scale = scale,

skew = skew),

.f = generate_data,

.progress = TRUE,

.options = furrr_options(seed = TRUE))) |>

# apply the analysis function to the generated data using the parameters relevant to analysis

mutate(analysis_results_students_t = future_pmap(.l = list(data = generated_data),

.f = analyze_data_students_t,

.progress = TRUE,

.options = furrr_options(seed = TRUE)))

# summarise simulation results over the iterations ----

## ie what proportion of p values are significant (< .05)

simulation_summary <- simulation |>

unnest(analysis_results_students_t) |>

mutate(skew = as.factor(skew)) |>

group_by(n,

location_control,

location_intervention,

scale,

skew) |>

summarize(proportion_significant_students_t = mean(hypothesis_test_p_students_t < .05),

.groups = "drop")

# plot

ggplot(simulation_summary, aes(n*2, proportion_significant_students_t, color = skew)) +

geom_hline(yintercept = 0.80, linetype = "dotted") +

geom_hline(yintercept = 0.05, linetype = "dotted") +

geom_point() +

geom_line() +

scale_x_continuous(breaks = breaks_pretty(n = 9), name = "Total sample size") +

scale_y_continuous(limits = c(0, 1), breaks = breaks_pretty(n = 9), name = "Proportion significant results") +

theme_linedraw() +

scale_color_viridis_d(begin = 0.2, end = 0.8)

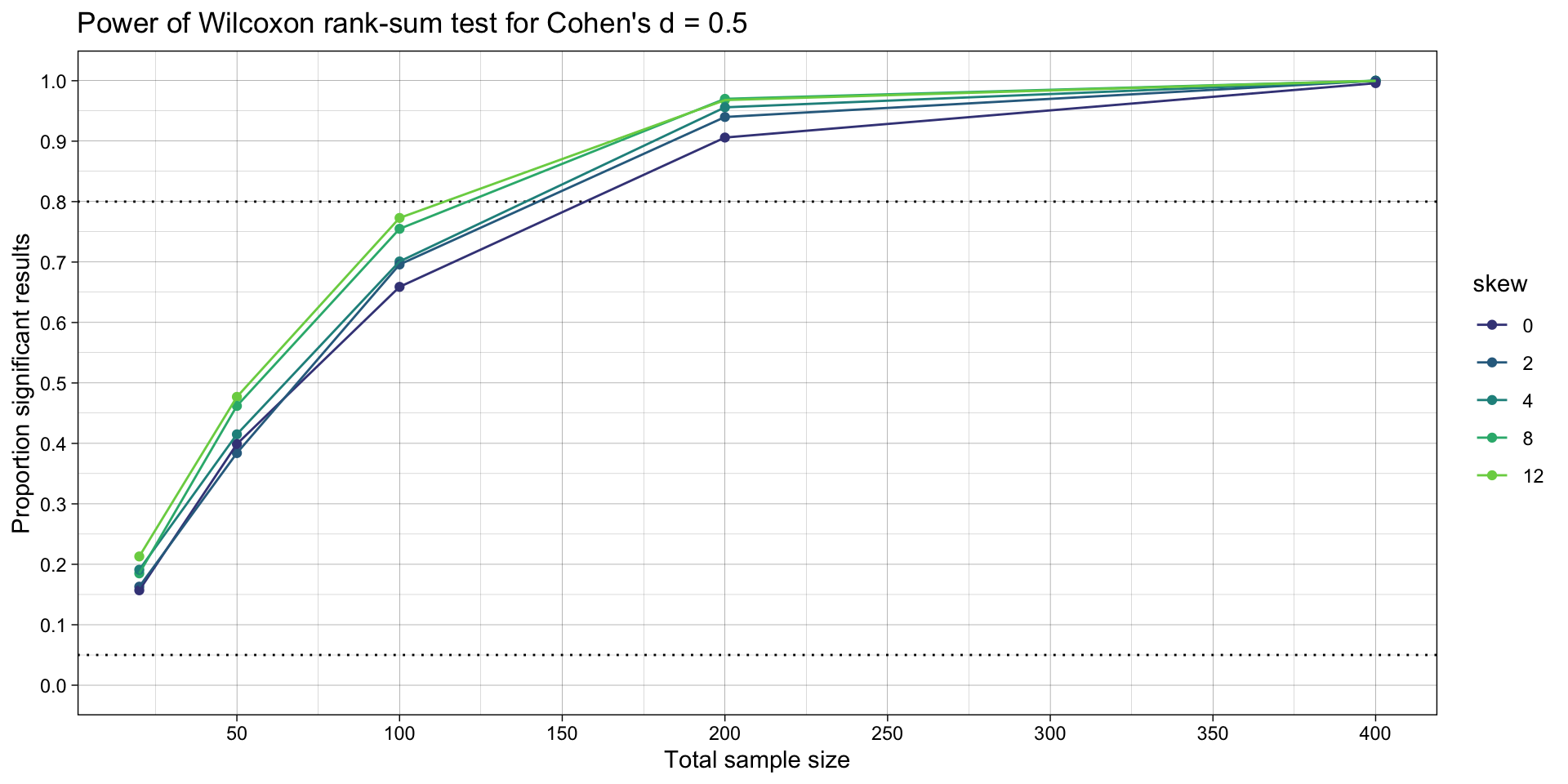

Aka Mann-Whitney U-test.

Write a function called analyze_data_wilcox() that uses wilcox.test() instead of t.test() to compare the differences in central tendency between the conditions.

# define data analysis function ----

analyze_data_wilcox <- function(data) {

hypothesis_test_wilcox <- wilcox.test(formula = score ~ condition, # added

data = data,

alternative = "two.sided")

res <- tibble(hypothesis_test_p_wilcox = hypothesis_test_wilcox$p.value) # added

return(res)

}# run simulation ----

simulation_alt_analysis <-

# using the experiment parameters

simulation |>

# apply the analysis function to the generated data using the parameters relevant to analysis

mutate(analysis_results_wilcox = future_pmap(.l = list(data = generated_data),

.f = analyze_data_wilcox,

.progress = TRUE,

.options = furrr_options(seed = TRUE)))

# summarise simulation results over the iterations ----

## ie what proportion of p values are significant (< .05)

simulation_summary <- simulation_alt_analysis |>

unnest(analysis_results_students_t) |>

unnest(analysis_results_wilcox) |>

mutate(skew = as.factor(skew)) |>

group_by(n,

location_control,

location_intervention,

scale,

skew) |>

summarize(proportion_significant_students_t = mean(hypothesis_test_p_students_t < .05),

proportion_significant_wilcox = mean(hypothesis_test_p_wilcox < .05),

.groups = "drop")

# plots

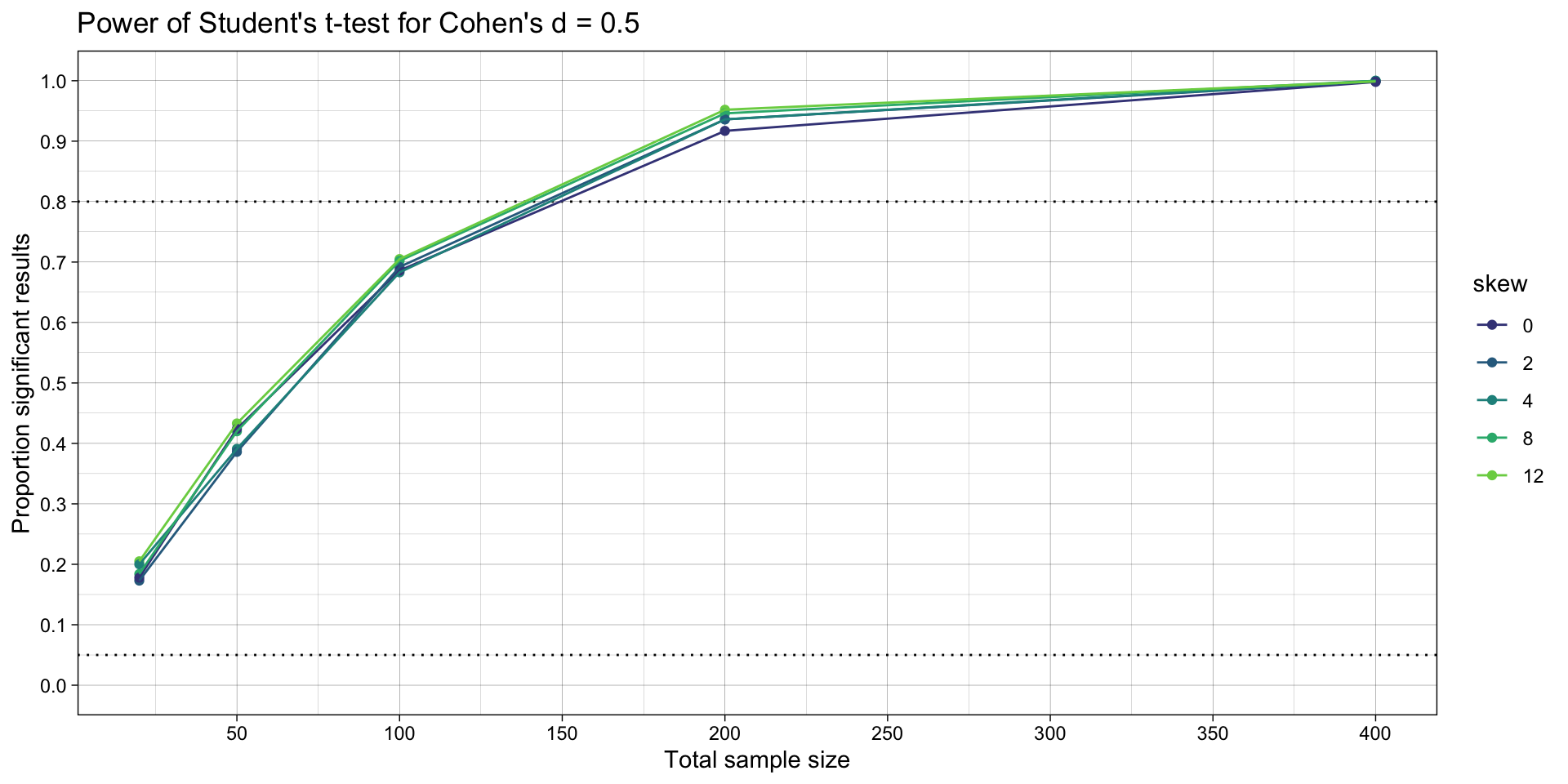

ggplot(simulation_summary, aes(n*2, proportion_significant_students_t, color = skew)) +

geom_hline(yintercept = 0.80, linetype = "dotted") +

geom_hline(yintercept = 0.05, linetype = "dotted") +

geom_point() +

geom_line() +

scale_x_continuous(breaks = breaks_pretty(n = 9), name = "Total sample size") +

scale_y_continuous(limits = c(0, 1), breaks = breaks_pretty(n = 9), name = "Proportion significant results") +

theme_linedraw() +

scale_color_viridis_d(begin = 0.2, end = 0.8) +

ggtitle("Power of Student's t-test for Cohen's d = 0.5")

ggplot(simulation_summary, aes(n*2, proportion_significant_wilcox, color = skew)) +

geom_hline(yintercept = 0.80, linetype = "dotted") +

geom_hline(yintercept = 0.05, linetype = "dotted") +

geom_point() +

geom_line() +

scale_x_continuous(breaks = breaks_pretty(n = 9), name = "Total sample size") +

scale_y_continuous(limits = c(0, 1), breaks = breaks_pretty(n = 9), name = "Proportion significant results") +

theme_linedraw() +

scale_color_viridis_d(begin = 0.2, end = 0.8) +

ggtitle("Power of Wilcoxon rank-sum test for Cohen's d = 0.5")

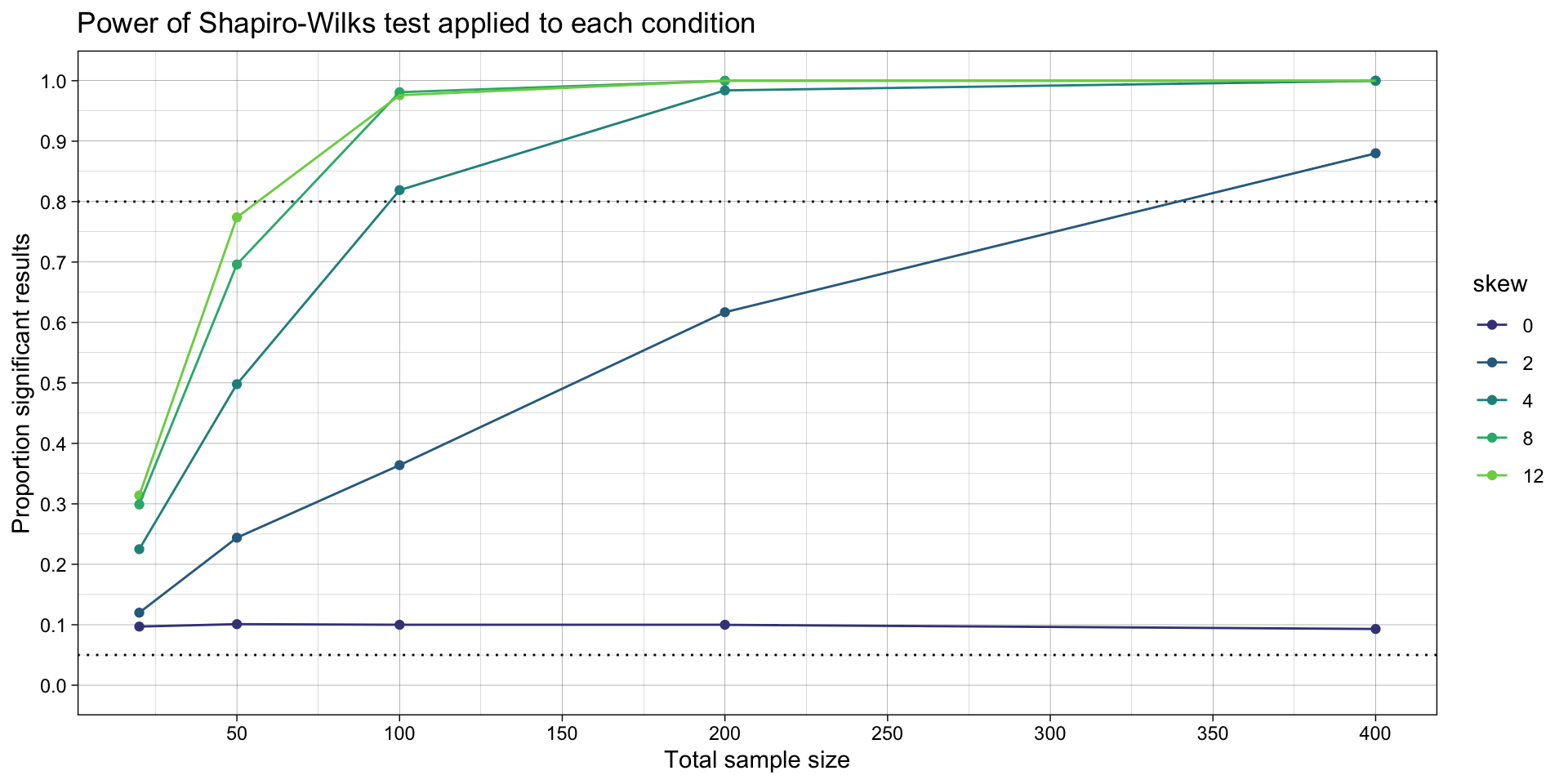

Write a function called test_assumption_of_normality() that uses shapiro.test() to assess for non-normality in each condition (control and intervention). It should return a p value for both tests.

# define data analysis function ----

test_assumption_of_normality <- function(data) {

fit_intervention <- data |>

filter(condition == "intervention") |>

pull(score) |>

shapiro.test()

fit_control <- data |>

filter(condition == "control") |>

pull(score) |>

shapiro.test()

res <- tibble(assumption_test_p_sharpirowilks_intervention = fit_intervention$p.value,

assumption_test_p_sharpirowilks_control = fit_control$p.value)

return(res)

}Test for normality in each of two samples, and return a decision of non-normality if it is found in either.

# run simulation ----

simulation_normality <-

# using the experiment parameters

simulation_alt_analysis |>

# apply the analysis function to the generated data using the parameters relevant to analysis

mutate(analysis_results_normality = future_pmap(.l = list(generated_data),

.f = test_assumption_of_normality,

.progress = TRUE,

.options = furrr_options(seed = TRUE)))

# summarise simulation results over the iterations ----

## ie what proportion of p values are significant (< .05)

simulation_summary <- simulation_normality |>

unnest(analysis_results_students_t) |>

unnest(analysis_results_wilcox) |>

unnest(analysis_results_normality) |>

mutate(skew = as.factor(skew)) |>

# choose the lower of the two shapiro wilk's tests' p values

mutate(assumption_test_p_sharpirowilks_lower = ifelse(assumption_test_p_sharpirowilks_intervention < assumption_test_p_sharpirowilks_control,

assumption_test_p_sharpirowilks_intervention,

assumption_test_p_sharpirowilks_control)) |>

group_by(n,

location_control,

location_intervention,

scale,

skew) |>

summarize(proportion_significant_students_t = mean(hypothesis_test_p_students_t < .05),

proportion_significant_wilcox = mean(hypothesis_test_p_wilcox < .05),

proportion_significant_shapirowilks = mean(assumption_test_p_sharpirowilks_lower < .05),

.groups = "drop")

# plot

ggplot(simulation_summary, aes(n*2, proportion_significant_shapirowilks, color = skew)) +

geom_hline(yintercept = 0.80, linetype = "dotted") +

geom_hline(yintercept = 0.05, linetype = "dotted") +

geom_point() +

geom_line() +

scale_x_continuous(breaks = breaks_pretty(n = 9), name = "Total sample size") +

scale_y_continuous(limits = c(0, 1), breaks = breaks_pretty(n = 9), name = "Proportion significant results") +

theme_linedraw() +

scale_color_viridis_d(begin = 0.2, end = 0.8) +

ggtitle("Power of Shapiro-Wilks test applied to each condition")

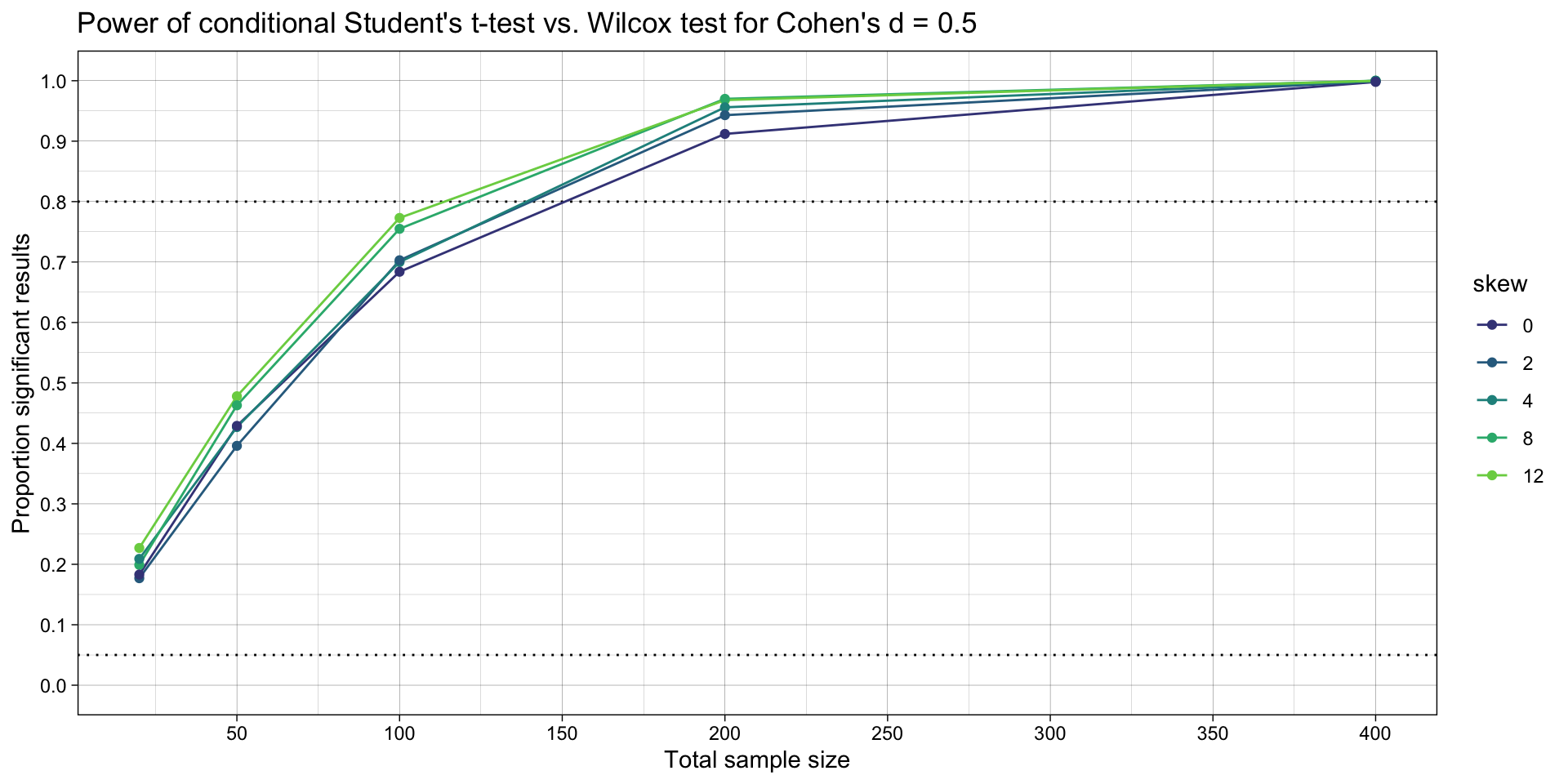

Modify the code below to implement condition logic between how the tests are interpreted.

I.e., for each iteration, calculate a conditional p value: if either of the Shapiro-Wilks tests are significant (evidence of non-normality), use the p value from the Wilcox test; if not, use the Student’s t test’s p value.

Summarize the power of the conditional p value vs. the Wilcox’s test’s p value. This compares the workflows where we a) use Shapiro-Wilk’s tests to choose which method to use to test the hypothesis vs. b) just use the non-parametric test by default.

# summarise simulation results over the iterations ----

simulation_summary <- simulation_normality |>

unnest(analysis_results_students_t) |>

unnest(analysis_results_wilcox) |>

unnest(analysis_results_normality) |>

# choose the lower of the two shapiro wilk's tests' p values

mutate(assumption_test_p_sharpirowilks_lower = ifelse(assumption_test_p_sharpirowilks_intervention < assumption_test_p_sharpirowilks_control,

assumption_test_p_sharpirowilks_intervention,

assumption_test_p_sharpirowilks_control)) |>

# condition logic

mutate(hypothesis_p_conditional = ifelse(assumption_test_p_sharpirowilks_lower < .05,

hypothesis_test_p_wilcox,

hypothesis_test_p_students_t)) |>

# summarize

mutate(skew = as.factor(skew)) |>

group_by(n,

location_control,

location_intervention,

scale,

skew) |>

summarize(proportion_significant_conditional = mean(hypothesis_p_conditional < .05),

proportion_significant_wilcox = mean(hypothesis_test_p_wilcox < .05),

.groups = "drop") |>

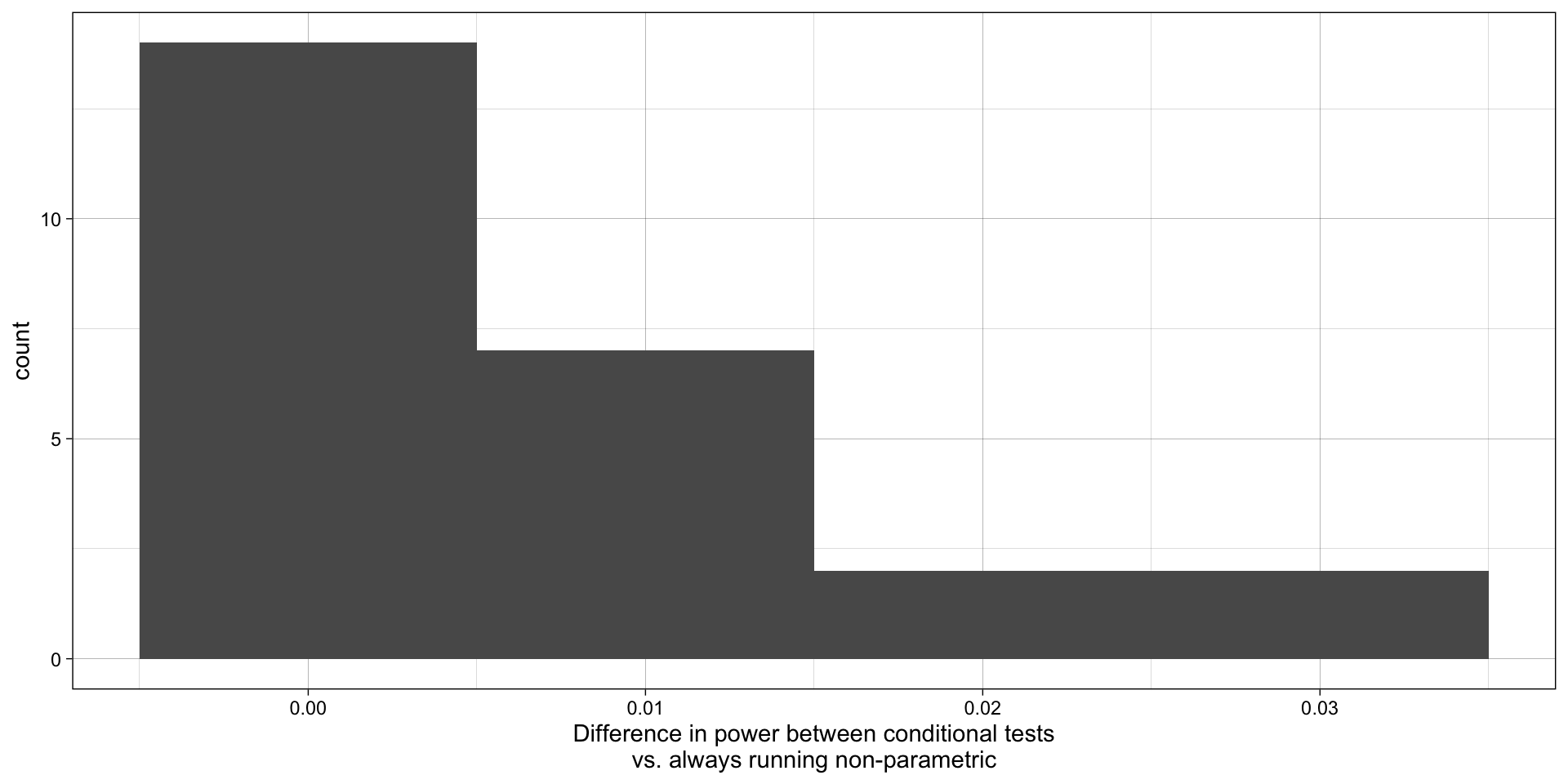

mutate(power_diff = proportion_significant_conditional - proportion_significant_wilcox)This stimulation tests normality in both conditions’ data, as well as testing for differences in the central tendency using both parametric and non-parametric tests. Which test of the differences in central tendency is used for each simulated data set is determined by whether the assumption of normality is detectably violated.

# summarise simulation results over the iterations ----

simulation_summary <- simulation_normality |>

unnest(analysis_results_students_t) |>

unnest(analysis_results_wilcox) |>

unnest(analysis_results_normality) |>

# choose the lower of the two shapiro wilk's tests' p values

mutate(assumption_test_p_sharpirowilks_lower = ifelse(assumption_test_p_sharpirowilks_intervention < assumption_test_p_sharpirowilks_control,

assumption_test_p_sharpirowilks_intervention,

assumption_test_p_sharpirowilks_control)) |>

# condition logic

mutate(hypothesis_p_conditional = ifelse(assumption_test_p_sharpirowilks_lower < .05,

hypothesis_test_p_wilcox,

hypothesis_test_p_students_t)) |>

# summarize

mutate(skew = as.factor(skew)) |>

group_by(n,

location_control,

location_intervention,

scale,

skew) |>

summarize(proportion_significant_conditional = mean(hypothesis_p_conditional < .05),

proportion_significant_wilcox = mean(hypothesis_test_p_wilcox < .05),

.groups = "drop") |>

mutate(power_diff = proportion_significant_conditional - proportion_significant_wilcox)

# plots

ggplot(simulation_summary, aes(n*2, proportion_significant_conditional, color = skew)) +

geom_hline(yintercept = 0.80, linetype = "dotted") +

geom_hline(yintercept = 0.05, linetype = "dotted") +

geom_line() +

geom_point() +

scale_x_continuous(breaks = breaks_pretty(n = 9), name = "Total sample size") +

scale_y_continuous(limits = c(0, 1), breaks = breaks_pretty(n = 9), name = "Proportion significant results") +

theme_linedraw() +

scale_color_viridis_d(begin = 0.2, end = 0.8) +

ggtitle("Power of conditional Student's t-test vs. Wilcox test for Cohen's d = 0.5")

# ggsave("plots/conditional.png",

# width = 7,

# height = 4)

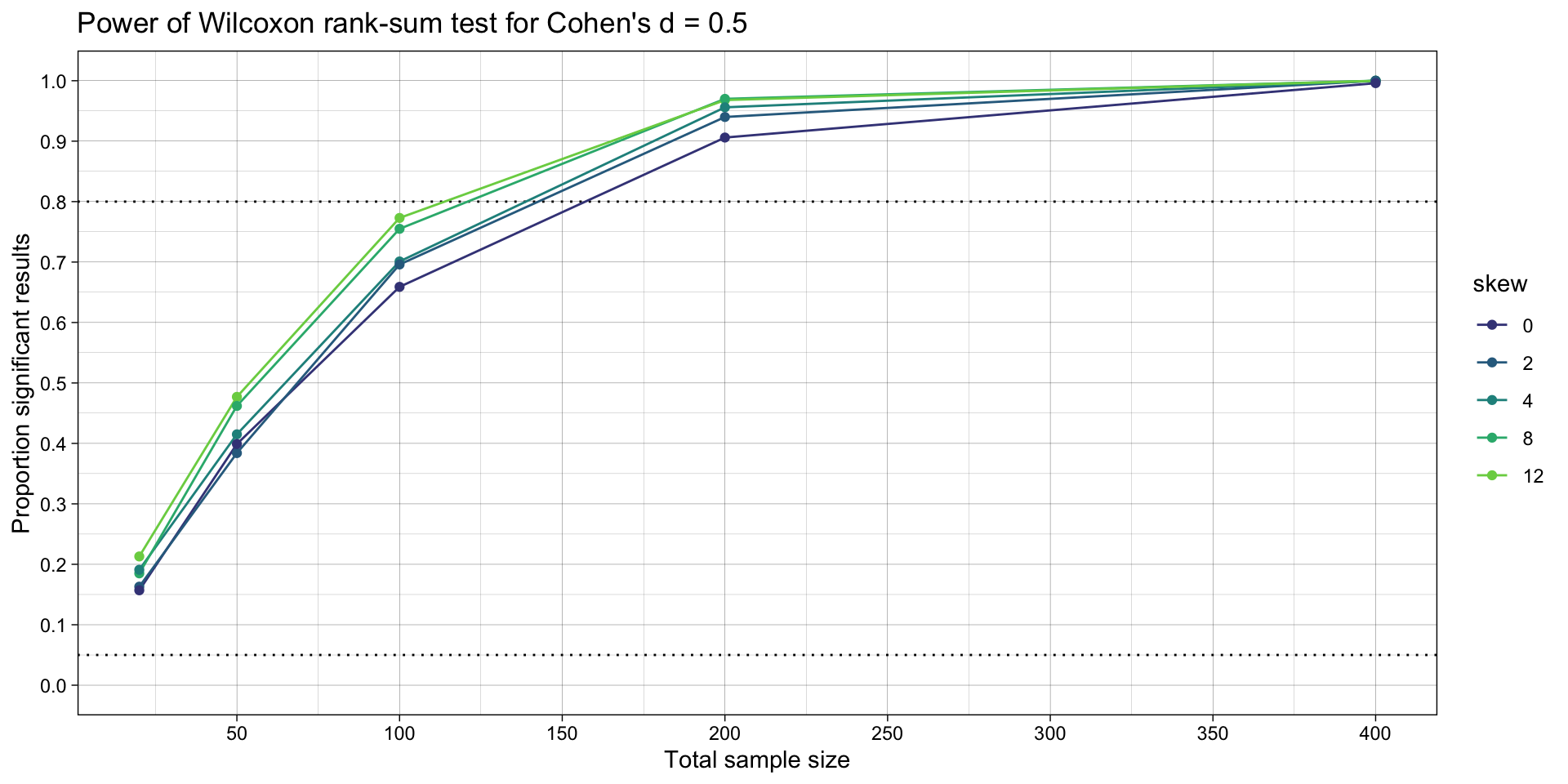

ggplot(simulation_summary, aes(n*2, proportion_significant_wilcox, color = skew)) +

geom_hline(yintercept = 0.80, linetype = "dotted") +

geom_hline(yintercept = 0.05, linetype = "dotted") +

geom_line() +

geom_point() +

scale_x_continuous(breaks = breaks_pretty(n = 9), name = "Total sample size") +

scale_y_continuous(limits = c(0, 1), breaks = breaks_pretty(n = 9), name = "Proportion significant results") +

theme_linedraw() +

scale_color_viridis_d(begin = 0.2, end = 0.8) +

ggtitle("Power of Wilcoxon rank-sum test for Cohen's d = 0.5")

# ggsave("plots/nonparametric.png",

# width = 7,

# height = 4)

ggplot(simulation_summary, aes(power_diff)) +

geom_histogram(binwidth = 0.01) +

theme_linedraw() +

xlab("Difference in power between conditional tests\nvs. always running non-parametric")

What does this tell us about whether we should (a) test the assumption of normality and then run a (non)parameteric test conditionally or just run the non-parametric test by default?

What interpretation issues might be an argument against doing this by default?

Are you starting to see why statisticians usually reply with “it depends” when we ask them questions?

What did you learn here?

One relatively simpler extension would be to assess how much better or worse just using the Student’s t test by default is compared to a) using Student’s t-test vs. Wilcox conditionally based on the Shapiro Wilks tests or b) using the Wilcox test by default. I.e., try adding c) using Student’s t-test by default.

Right now the simulation only examines a fixed effect size. Perhaps the supposed negative impact of violating normality would be seen at other effect sizes? Vary this or indeed other things to make an informed decision about how to choose which test(s) to run to make the inference about differences in means between conditions.

Decisions about which hypothesis test is used are also made on the basis of other tests of those assumptions. For example, heteroscedasticity (equal SDs) can be tested using leveneTest(). This has lower power than Bartlett’s test but does not assume normality.

Write a simulation that is analogous to the one above, but which compares the statistical power of a) the conditional use of Student’s t test or Wilcox test based on the result of Levene’s test vs. b) using Wilcox test by default.

For simplicity:

n = c(10, 25, 50, 100, 200)).